The oracle has opinions

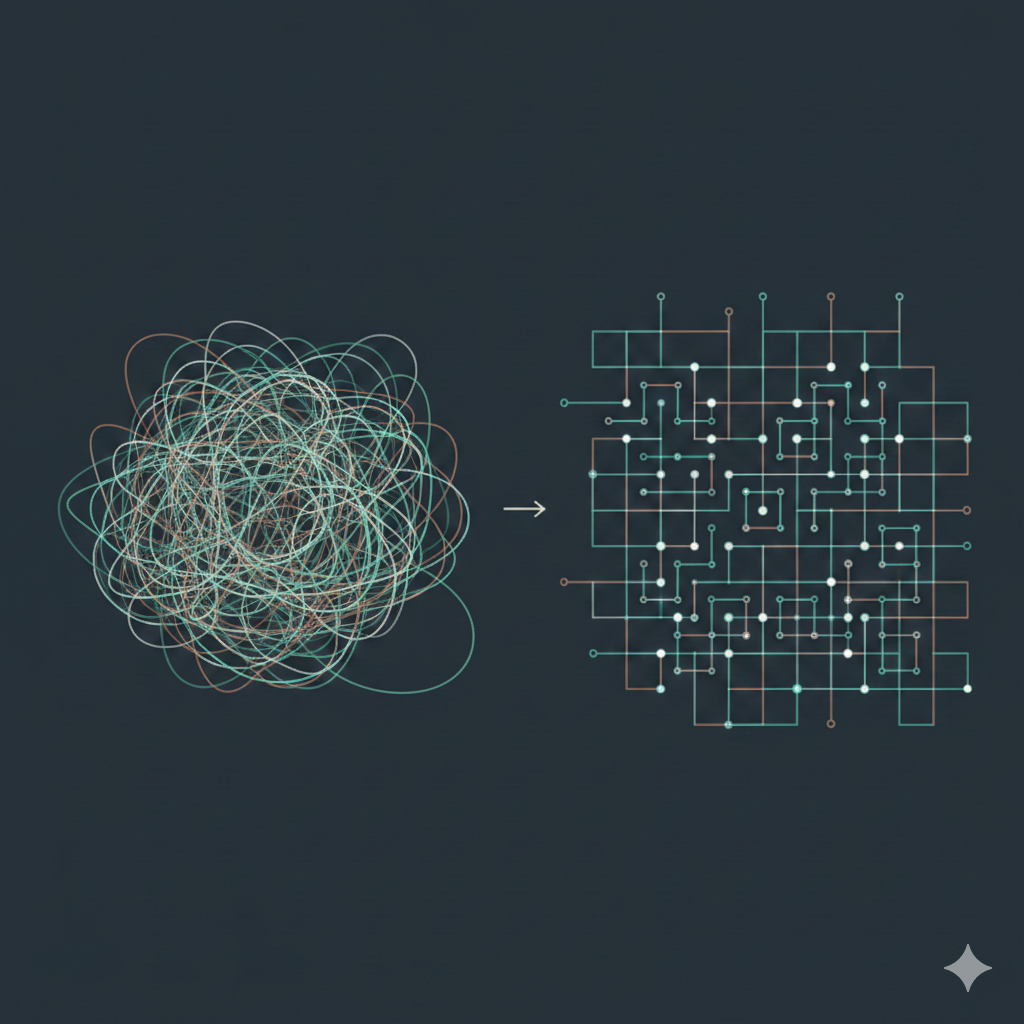

This is part of a series where I write about what I’m learning in an AI evals and analytics course. Earlier posts cover the basics of evals, sniff tests, and quantitative evaluation with LLM-as-a-judge. In traditional testing, the oracle is trustworthy. You know what the right answer is, or at least you know who decides. […]
